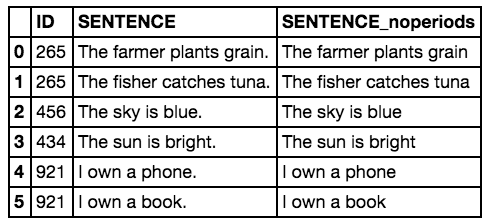

Pandas provides a method to split a string around a passed separator/delimiter. After that, the resulting pieces can be stored as a list in a Series. I am trying to convert a Pandas DataFrame containing sentences into one that shows the number of words in those sentences across all columns and rows. I have tried apply, transform and lambda functions.
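As a minimal sketch of that word-count idea (the `count_words` helper and the sample data are made up for illustration), `Series.str.split()` turns each sentence into a list of words and `.str.len()` then gives the length of each list. Applying that to every column yields a DataFrame of counts with the same shape:

```python
import pandas as pd


def count_words(df):
    """Return a DataFrame of the same shape holding the word count of each cell."""
    # str.split() with no separator splits on whitespace; str.len() measures each list.
    return df.apply(lambda col: col.str.split().str.len())


# Hypothetical sample data: every cell holds one sentence.
sentences = pd.DataFrame({
    "title": ["text analytics with pandas", "hello world"],
    "body": ["split strings on whitespace", "one two three four five"],
})
word_counts = count_words(sentences)

# Splitting on an explicit delimiter stores the pieces as a list in the Series.
parts = pd.Series(["data,python,canon"]).str.split(",")
```

This assumes every cell is a string; missing values would come back as NaN rather than a count.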

For this post, I want to describe a text analytics and visualization technique built on a basic keyword extraction mechanism: nothing but a word counter that finds the top 3 keywords in each article of a corpus I’ve created from my blog. To create this corpus, I downloaded all of my blog posts (1400 of them) and grabbed the text of each post. Then I tokenize each post using nltk and various stemming / lemmatization techniques, count the keywords and take the top 3 keywords. I then aggregate all keywords from all posts to create a visualization.

You can also get a subset of my blog articles in a csv file. You’ll need beautifulsoup and nltk installed; both can be installed with pip.

The tokenizer splits the text into sentences and words, drops stop words and punctuation, and lemmatizes what is left:

    import re
    from string import punctuation

    import pandas as pd
    from bs4 import BeautifulSoup
    from nltk import sent_tokenize, word_tokenize
    from nltk.corpus import stopwords
    from nltk.stem import WordNetLemmatizer

    # Assumed setup: the definitions of `stop` and `wnl` are not shown in this post.
    stop = set(stopwords.words('english'))
    wnl = WordNetLemmatizer()

    def tokenizer(text):
        # Split the text into sentences, then each sentence into word tokens.
        sentences = [word_tokenize(sent) for sent in sent_tokenize(text)]
        tokens = []
        for token_by_sent in sentences:
            tokens += token_by_sent

        # Drop stop words, punctuation and tokenizer leftovers.
        tokens = list(filter(lambda t: t.lower() not in stop, tokens))
        tokens = list(filter(lambda t: t not in punctuation, tokens))
        tokens = list(filter(lambda t: t not in ["'s", "n't", "...", "''", "``", "\u2014", "\u2026", "\u2013"], tokens))

        # Lemmatize and keep only tokens that contain at least one letter.
        filtered_tokens = []
        for token in tokens:
            token = wnl.lemmatize(token)
            if re.search('[a-zA-Z]', token):
                filtered_tokens.append(token)

        filtered_tokens = list(map(lambda token: token.lower(), filtered_tokens))
        return filtered_tokens

Next, a function walks over every article, cleans the text, tokenizes it and collects the keywords:

    def build_article_df(urls):
        articles = []
        for index, row in urls.iterrows():
            try:
                data = row['text'].strip().replace("'", "")
                data = strip_tags(data)  # helper defined elsewhere; not shown in this post
                soup = BeautifulSoup(data, 'html.parser')
                data = soup.get_text()
                data = data.encode('ascii', 'ignore').decode('ascii')
                document = tokenizer(data)
                top_5 = get_keywords(document, 5)  # helper defined elsewhere; not shown in this post
                # Take the first element of each entry returned by get_keywords.
                kw = ','.join(str(entry[0]) for entry in top_5)
                articles.append((kw, row['title'], row['pubdate']))
            except Exception as e:
                print(e)
        article_df = pd.DataFrame(articles, columns=['keywords', 'title', 'pubdate'])
        return article_df

    article_df = build_article_df(data_df)

This gives us a new dataframe with the top keywords for each article (along with the pubdate and title of the article).

This is quite cool by itself. We’ve generated keywords for each article automatically using a simple counter. Not terribly sophisticated, but it works and works well.
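The keyword-counting helper called in the code above is not shown in this post. A minimal sketch of what such a "simple counter" could look like, using `collections.Counter` from the standard library, follows. The name `top_keywords` and the sample tokens are hypothetical stand-ins, not the original helper:

```python
from collections import Counter


def top_keywords(tokens, num):
    """Return the `num` most frequent tokens as (token, count) pairs."""
    # Counter tallies each token; most_common sorts by descending count.
    return Counter(tokens).most_common(num)


# Hypothetical token list, as a tokenizer might return it for one article.
sample_tokens = ["data", "python", "data", "canon", "data", "python"]
keywords = top_keywords(sample_tokens, 2)
keyword_string = ",".join(word for word, count in keywords)
```

Joining only the words, as in the last line, produces the kind of comma-separated keyword string stored for each article.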

There are many other ways to do this, but for now we’ll stick with this one. Beyond just having the keywords, it might be interesting to see how these keywords are ‘connected’ with each other and with other keywords. For example, how many times does ‘data’ show up in other articles?

There are multiple ways to answer this question, but one way is by visualizing the keywords in a topology / network map to see the connections between keywords. We need to do a ‘count’ of our keywords and then build a co-occurrence matrix. This matrix is what we can then import into Gephi to visualize. We could draw the network map using networkx, but it tends to be tough to get something useful from that without a lot of work; using Gephi is much more user friendly.

We have our keywords and need a co-occurrence matrix. To get there, we need to take a few steps to get our keywords broken out individually.
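The intermediate steps are not shown here, so the following is only one possible way to get from comma-separated keyword strings to a co-occurrence matrix with pandas. The function name `build_cooccurrence` and the sample keywords are assumptions made for illustration:

```python
import numpy as np
import pandas as pd


def build_cooccurrence(keywords):
    """Build a keyword-by-keyword co-occurrence matrix from comma-separated strings."""
    # One row per article, one 0/1 column per distinct keyword.
    indicators = keywords.str.get_dummies(sep=",")
    # For every keyword pair, count the articles that contain both keywords.
    counts = indicators.T.dot(indicators)
    # Zero the diagonal so a keyword is not counted as co-occurring with itself.
    values = counts.to_numpy().copy()
    np.fill_diagonal(values, 0)
    return pd.DataFrame(values, index=counts.index, columns=counts.columns)


# Hypothetical keyword strings, one per article.
sample_keywords = pd.Series(["data,python", "data,canon", "canon,photo,data"])
co_occur = build_cooccurrence(sample_keywords)
```

The result is a symmetric DataFrame whose row and column labels are the keywords, which is the shape a matrix-style CSV export needs.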

The finished matrix is written out with `to_csv('out/ericbrownco-occurancymatrix.csv')`.

Over to Gephi

Now, it’s time to play around in Gephi. I’m a novice in the tool so I can’t really give you much in the way of a tutorial, but I can tell you the steps you need to take to build a network map. First, import your co-occurrence matrix csv file using File - Import Spreadsheet and just leave everything at the default. Then, in the ‘overview’ tab, you should see a bunch of nodes and connections like the image below.

Network map of a subset of ericbrown.com articles

Next, move down to the ‘layout’ section, select the Fruchterman Reingold layout and push ‘run’ to get the map to redraw.

At some point, you’ll need to press ‘stop’ after the nodes settle down on the screen. You should see something like the below.

Network map of a subset of ericbrown.com articles

Cool, huh? Now let’s get some color into this graph. In the ‘appearance’ section, select ‘nodes’ and then ‘ranking’.

Select ‘Degree’ and hit ‘apply’. You should see the network graph change and now have some color associated with it. You can play around with the colors if you want, but the default color scheme should look something like the following:

Still not quite interesting though. Where’s the text/keywords? Well, you need to switch over to the ‘preview’ tab to see that.

You should see something like the following (after selecting ‘Default Curved’ in the drop-down).

Now that’s pretty cool. You can see two very distinct areas of interest here: ‘Data’ and ‘Canon’, which makes sense since I write a lot about data and share a lot of my photography (taken with a Canon camera).

Here’s a full map of all 1400 of my articles if you are interested. Again, there are two main clusters around photography and data, but there’s also another large cluster around ‘business’, ‘people’ and ‘cio’, which fits with what most of my writing has been about over the years.

There are a number of other ways to visualize text analytics. I’m planning a few additional posts to talk about some of the more interesting approaches that I’ve used and run across recently. Stay tuned.

Data analytics. Data science. Data processing. Predictive analytics.

Regardless of what needs to be done or what you call the activity, the first thing you need to know is “how” to analyze data. You also need to have a tool set for analyzing data.

If you work for a large company, you may have a full-blown big data suite of tools and systems to assist in your analytics work. Otherwise, you may have nothing but Excel and open source tools to perform your analytics activities.

This post and this site are for those of you who don’t have the ‘big data’ systems and suites available to you. On this site, we’ll be talking about using Python for data analytics. I started this blog as a place for me to write about working with Python for my various data analytics projects.